AI UGC for Ecommerce in 2026: The Complete Brand Guide

Topics: ai ugc for ecommerce · ugc video for shopify · ugc video for amazon ads · ai ugc product video · ai ugc for facebook ads

If you run an ecommerce brand in 2026, you already know the content treadmill. Every week, your ad sets need fresh creative. Every platform — Meta, TikTok, Amazon, Pinterest — wants native-format video. And the gap between how much content you need and how much you can afford to produce keeps getting wider.

The traditional options haven't improved: hiring UGC creators still takes 2–4 weeks and $350–$800 per video. In-house production means cameras, studios, and models. Agencies are expensive and slow to brief.

AI-generated UGC changes this equation fundamentally — but most coverage of the topic either oversells the technology or undersells the practical workflow. This guide cuts through both.

We'll cover exactly what AI UGC can and can't do for ecommerce brands in 2026, how Designkit's agent-powered generation works across three distinct modes, and what real DTC brands have achieved using it. By the end, you'll have a clear picture of whether — and how — it fits your content operation.

Quick definition: "AI UGC" in this article means video content generated by an AI agent using your brand assets (product images, text briefs, or reference videos) — designed to look and perform like authentic user-generated content in paid and organic social channels.

1. Why Traditional UGC Production Doesn't Scale

To understand where AI UGC fits, it helps to be precise about where the traditional model breaks down. There are four distinct friction points, and they compound.

The creator pipeline problem

The average UGC creator engagement involves 3–5 briefing rounds before shooting even begins, followed by one week of production and one week of revisions. For brands running performance creative at volume, this means a 3–4 week minimum cycle per video — and a marketing team spending 40% of its bandwidth on production coordination rather than optimization.

The in-house cost ceiling

Studio rental, model day-rates, and editing retainers stack up quickly. A production session that yields 5–8 usable clips typically runs $2,500 or more. When an ad underperforms, there's no fast iteration path — you're back at the top of the production queue.

The stock footage originality problem

Meta's 2026 originality scoring penalizes repetitive and generic creative more aggressively than at any point in the platform's history. Generic stock video now yields CTRs below 0.6% in most verticals. If your creative looks like everyone else's, the algorithm treats it accordingly.

The agency coordination problem

Agency rates run as high as $650 per deliverable, and communicating a brand's standard operating procedures (SOP) through written briefs is notoriously error-prone. Two-to-three week production turnarounds mean flash-sale assets frequently miss the sale window.

The underlying issue isn't cost or quality in isolation — it's the combination of high cost, long lead times, and zero ability to iterate quickly. AI UGC addresses all three simultaneously.

None of this means professional creators or agencies have no place in an ecommerce brand's content mix. They do — particularly for hero brand content and high-production brand films. But for performance creative at volume, the math simply doesn't work at traditional production economics.

2. How AI UGC Actually Works in 2026 — Seedance 2.0 Under the Hood

Not all AI video generation is the same. Understanding what the underlying model can actually do — and what it can't — is essential for setting realistic expectations.

Designkit runs on Seedance 2.0, ByteDance's state-of-the-art video generation model (Elo score of 1269 on Artificial Analysis Arena as of Q1 2026). Here's what its core capabilities translate into for ecommerce use cases:

| Seedance 2.0 Capability | What it means for your ad account |
| --- | --- |
| 12-type multimodal input | Feed product image + brand colors + model reference + scene description simultaneously. The output is brand-consistent from frame one, with no manual asset placement. |
| Precise first & last frame control | Specify exactly what the opening hook looks like and what the final CTA frame contains, including brand logo position. No post-production required. |
| <40ms audio lip-sync | AI avatar voiceovers sync precisely without manual alignment. Cuts edit time by roughly 30% compared to standard dubbing workflows. |
| 15-second continuous single-shot action | Outputs the 15-second TikTok Spark Ads standard format in one uninterrupted shot. No jump-cut stitching, no continuity breaks. |
| Native 2K output | Crop to 4:5 or 9:16 with zero quality loss. Meets Meta and TikTok recommended resolution specs natively. |
| Multi-character/product consistency | The same AI avatar can appear across 10 different shots in a campaign without breaking visual continuity or requiring re-casting. |

One important note on what AI UGC is not: Seedance 2.0 does not generate deepfake likenesses of real, identifiable people. AI avatars are original synthetic characters. This distinction matters for platform compliance, which we cover in Section 5.

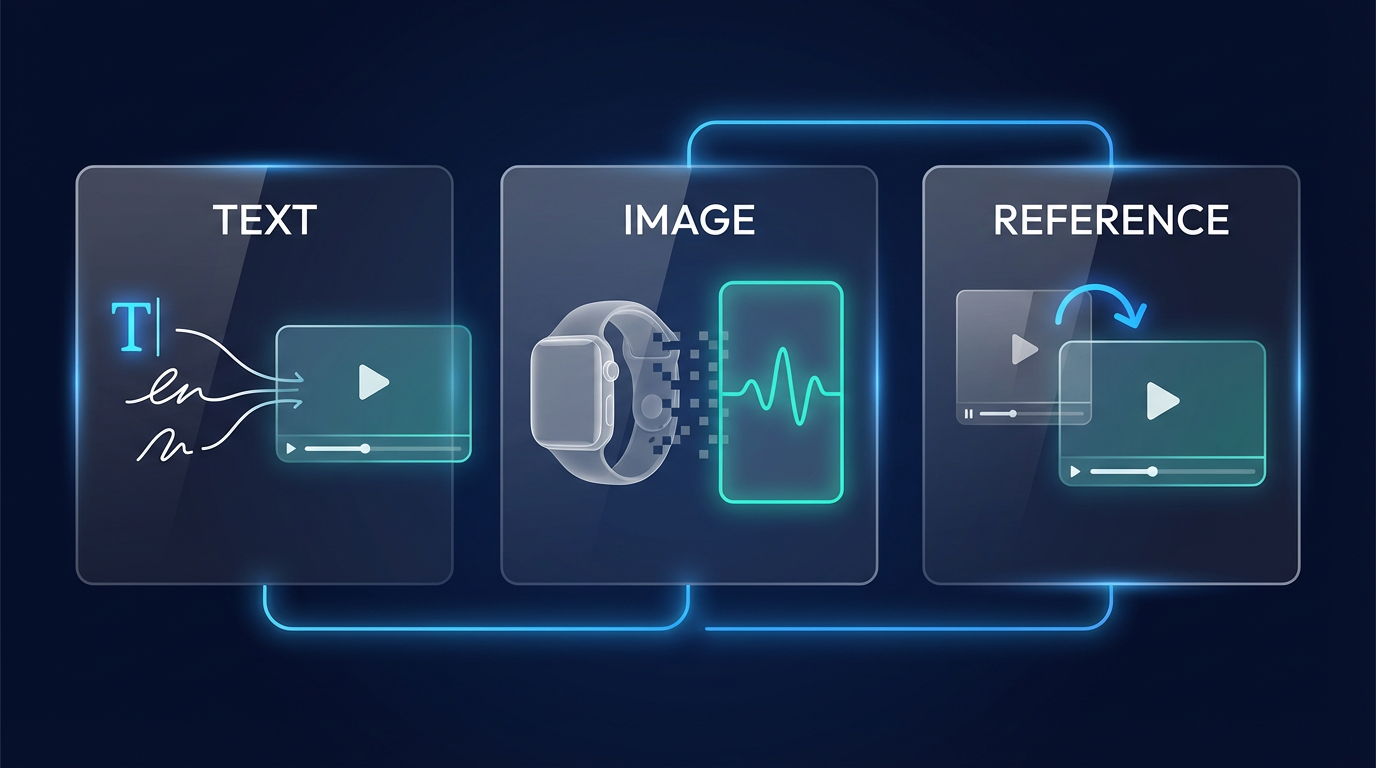

3. Three Generation Modes — and When to Use Each

Designkit's agent doesn't work from fixed templates. It generates video from your inputs — and there are three distinct modes depending on what assets you're starting from. Choosing the right mode for the right situation is the core skill to develop.

Mode 1 — Text-to-Video

You write a brief. The agent generates the entire video — script, visuals, scene composition, motion, and voiceover — from your words alone. No existing footage or product imagery required.

This mode is best suited to:

- Concept-first briefs where you know the creative angle but don't have assets yet

- Rapid A/B hook testing — write 5 different opening hooks and generate all 5 in one batch

- Brand awareness content where atmosphere and storytelling matter more than product close-ups

Example brief: "Open with someone discovering our skincare product on a bathroom shelf, surprised reaction, ASMR unboxing, ends with clear skin reveal. Warm morning light. 15 seconds. 9:16."

Practical tip

The more specific your brief, the more controllable the output. Vague briefs produce generic videos. Specifics like lighting mood, pacing, hook type, and scene transitions are all fair game.
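One way to keep briefs specific is to treat each detail as a required field rather than free text. The sketch below is illustrative only: `VideoBrief` and `hook_variants` are hypothetical names, not part of any Designkit interface, and the output is plain text you would paste into the brief box. It rebuilds the example brief above and shows how swapping only the hook yields a batch for A/B testing.

```python
from dataclasses import dataclass


@dataclass
class VideoBrief:
    """Hypothetical container for the specifics a text brief should pin down."""
    hook: str
    scenes: list[str]
    lighting: str
    duration_seconds: int = 15
    aspect_ratio: str = "9:16"

    def render(self) -> str:
        """Join the fields into a single plain-text brief."""
        body = ", ".join([f"Open with {self.hook}", *self.scenes])
        return (
            f"{body}. {self.lighting}. "
            f"{self.duration_seconds} seconds. {self.aspect_ratio}."
        )


def hook_variants(base: VideoBrief, hooks: list[str]) -> list[str]:
    """Render one brief per opening hook, keeping every other field fixed."""
    return [
        VideoBrief(hook, base.scenes, base.lighting,
                   base.duration_seconds, base.aspect_ratio).render()
        for hook in hooks
    ]


base = VideoBrief(
    hook="someone discovering our skincare product on a bathroom shelf",
    scenes=["surprised reaction", "ASMR unboxing", "ends with clear skin reveal"],
    lighting="Warm morning light",
)
print(base.render())
```

Because only the hook changes between variants, any difference in ad performance is easier to attribute to the opening rather than to lighting or pacing.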

Mode 2 — Image-to-Video

Upload 2–4 product photos. The agent analyzes them and generates a dynamic, platform-native UGC clip — adding motion, scene transitions, voiceover narration, and visual composition automatically.

This is the most widely used mode among ecommerce brands because the input barrier is so low: every brand already has product photography.

This mode works particularly well for:

- Shopify and Amazon catalog items where product photography already exists

- Before/After and product reveal formats where the visual sequence is the message

- Seasonal campaigns where you need volume quickly around a product line

- New SKU launches before any video production has been scheduled

Mode 3 — Reference Video Replication

Upload or link a high-performing UGC video — your own best-performing ad, or a competitor's viral post — and the agent recreates its underlying creative structure using your brand's assets. Same hook mechanics. Same pacing rhythm. Same scene architecture. Your product, your brand colors.

This mode is powerful for:

- Scaling a winning creative formula across new products or audiences without rebuilding from scratch

- Applying viral TikTok trends to your own product without needing trend-aware production talent

- Maintaining creative consistency across large ad sets while still generating unique variants

When to combine modes

Many experienced Designkit users combine modes across a single campaign: Text-to-Video for concept exploration, Image-to-Video for the core SKU set, and Reference Replication to clone the top performer across additional product lines.
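As a rough rule of thumb, the choice of starting mode follows from the assets you already have. The sketch below encodes that rule; the function name, the priority order (reference video first, then photos, then text), and the two-photo threshold are assumptions drawn from the descriptions above, not a documented Designkit decision rule.

```python
from enum import Enum


class Mode(Enum):
    TEXT_TO_VIDEO = "Text-to-Video"
    IMAGE_TO_VIDEO = "Image-to-Video"
    REFERENCE_REPLICATION = "Reference Video Replication"


def suggest_mode(product_photos: int, has_reference_video: bool) -> Mode:
    """Suggest a starting mode from the assets on hand (illustrative only)."""
    if has_reference_video:
        # A proven ad to clone is the strongest starting point.
        return Mode.REFERENCE_REPLICATION
    if product_photos >= 2:
        # Image-to-Video is described as working from 2-4 product photos.
        return Mode.IMAGE_TO_VIDEO
    # With no usable assets, start from a written brief.
    return Mode.TEXT_TO_VIDEO


print(suggest_mode(product_photos=3, has_reference_video=False).value)
```

In a real campaign you would likely run more than one mode, as described above; this only picks a sensible first step.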

4. Platform-Specific Workflows

The generation process adapts to your destination platform. Here's how it works in practice for the two most common ecommerce contexts.

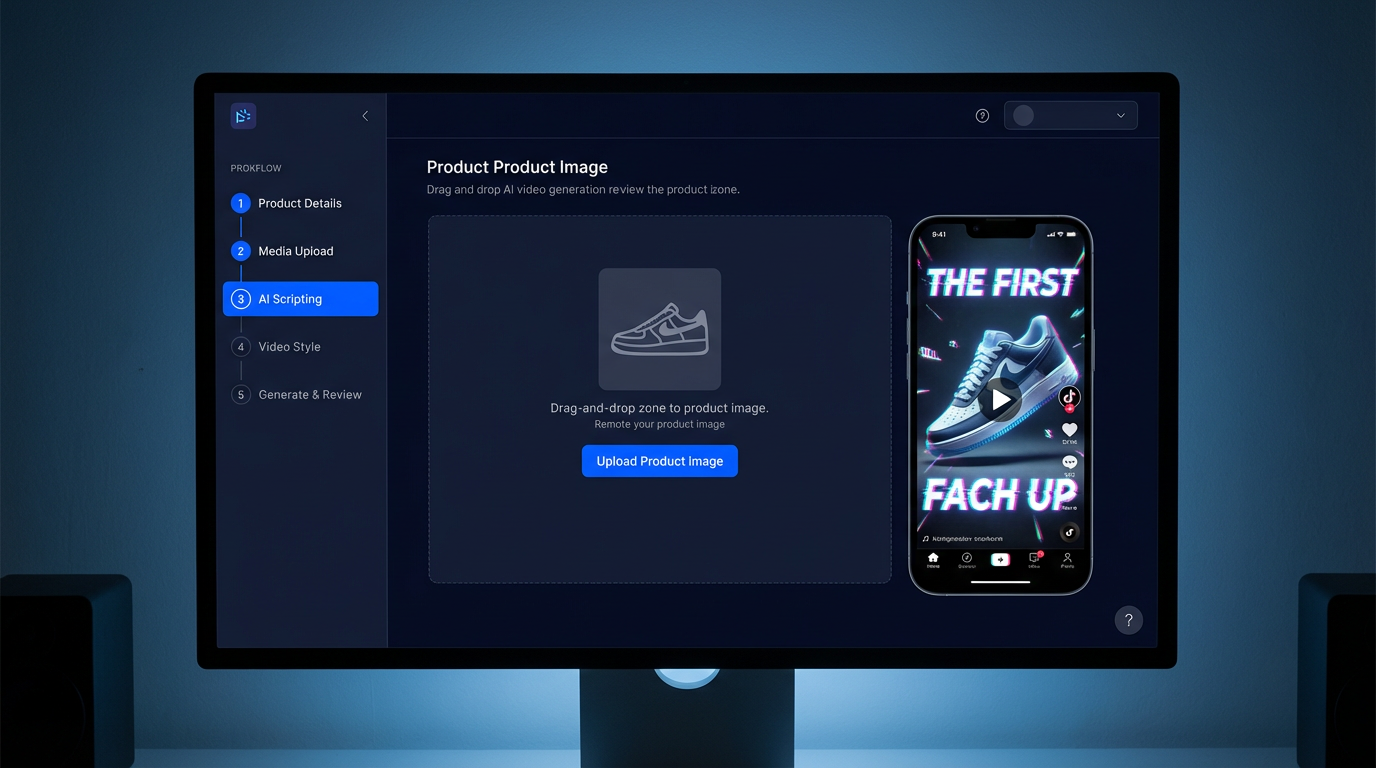

Shopify → Meta / TikTok Spark Ads (5-minute workflow)

This is the fastest path from product page to live ad. Most brands complete it in under five minutes on their first attempt.

| Step | Time | What happens |
| --- | --- | --- |
| 1 | 0:00–0:30 | Install Designkit from the Shopify App Store. Select a SKU. The app auto-pulls the hero image, price, and alt-text, with no manual upload. |
| 2 | 0:30–1:00 | Choose your generation mode: Text-to-Video if you're briefing from scratch, Image-to-Video if you're working from product photos, or Reference Replication if you have a winning ad to clone. |
| 3 | 1:00–2:00 | Upload brand logo and optionally a model reference image (or generate an AI avatar). The agent weaves these into every frame. |
| 4 | 2:00–4:00 | Hit Generate. Seedance 2.0 produces three variants in 90–120 seconds, each with a different hook, pacing, or CTA treatment. Preview all three. |
| 5 | 4:00–5:00 | Select your preferred variant. It saves to Shopify Media automatically and is immediately available for Meta Ads Manager and TikTok Ads Manager. |

Download: 5-Minute Shopify UGC Workflow — Screenshot Pack (PDF, 14 pp.) → designkit.com/resources/shopify-ugc-workflow

Amazon A+ and Main Image Video

Amazon's content guidelines are strict — pricing overlays, competitive comparisons, and unverified claims all trigger review rejection. Designkit's agent includes a built-in Amazon TOS compliance layer that screens every generation before download.

The five-step Amazon workflow:

- Upload 3 product angles (360° where possible) plus your ASIN if available — the agent auto-pulls your listing title and bullet points for context.

- Brief the agent: describe your product's core benefit, target customer, and desired tone. Or use Reference Replication to match the structure of a high-performing A+ video in your category.

- The agent generates two versions simultaneously: an A+ Content Module video and a Product Detail Page main image video — different specs, one generation job.

- Run the built-in compliance pre-check. The system flags outputs that touch any of Amazon's 10 restricted content categories (including pricing, competitor references, absolute superlatives, and unverified health claims) before download.

- Download MP4 + thumbnail in Amazon's exact specification (1920×1080, H.264, 30fps, under 30 seconds). Upload directly to Seller Central.
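If you want to double-check a file before uploading, the export spec quoted in the last step is simple enough to verify yourself. The sketch below assumes you have already read the file's properties with a tool of your choice; `VideoMeta` and `amazon_spec_issues` are hypothetical names, and the thresholds are the ones stated above rather than an official Amazon reference.

```python
from dataclasses import dataclass


@dataclass
class VideoMeta:
    """Hypothetical summary of an exported file's properties."""
    width: int
    height: int
    codec: str
    fps: float
    duration_seconds: float


def amazon_spec_issues(meta: VideoMeta) -> list[str]:
    """List the ways a file departs from 1920x1080, H.264, 30fps, under 30s."""
    issues = []
    if (meta.width, meta.height) != (1920, 1080):
        issues.append(f"resolution is {meta.width}x{meta.height}, expected 1920x1080")
    if meta.codec.lower().replace(".", "") != "h264":
        issues.append(f"codec is {meta.codec}, expected H.264")
    if meta.fps != 30:
        issues.append(f"frame rate is {meta.fps}, expected 30")
    if meta.duration_seconds >= 30:
        issues.append(f"duration is {meta.duration_seconds}s, expected under 30s")
    return issues


print(amazon_spec_issues(VideoMeta(1920, 1080, "H.264", 30, 28.5)))  # []
```

An empty list means the file matches the stated spec; it says nothing about content compliance, which is what the pre-check covers.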

Important: Designkit is currently the only Seedance 2.0 platform with a built-in Amazon A+ compliance checker. This matters: failed Amazon video reviews delay listing updates and, in some categories, suppress ranking during the review period.

5. Platform Compliance — Meta, TikTok, and Amazon

The most common question we hear from brands considering AI UGC: "Will our ads get flagged or suppressed because they're AI-generated?"

The straightforward answer is no — with three conditions. And understanding those conditions matters more than the yes/no.

The three universal requirements

- Use authentic brand assets. Always start from your real product images, logo, and brand colors. Seedance 2.0 generates original synthetic characters — it does not create deepfake likenesses of real, identifiable people. This is the single most important compliance principle.

- Produce a minimum of 3 hook variants per ad set. Meta's 2026 Advantage+ algorithm actively rewards creative diversity. Running identical creative across an audience group triggers originality penalties. Three distinct hook treatments per audience is the current baseline.

- Export at 1080p minimum / 2 Mbps bitrate. Low-bitrate uploads trigger automatic quality suppression on both Meta and TikTok. Designkit exports at 2K by default.
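The second and third requirements are mechanical enough to check before launch. Here is a minimal sketch, assuming you track each ad set's hook lines and export settings yourself; `ad_set_issues` is a hypothetical helper, and reading "1080p" as a short side of at least 1080 pixels is an assumption made so that vertical 9:16 exports pass. The first requirement, authentic brand assets, still needs a human check.

```python
def ad_set_issues(
    hooks: list[str], width: int, height: int, bitrate_mbps: float
) -> list[str]:
    """Flag an ad set that misses the variant or export baselines above."""
    issues = []
    # Treat hooks that differ only in case or whitespace as the same hook.
    distinct_hooks = {hook.strip().lower() for hook in hooks}
    if len(distinct_hooks) < 3:
        issues.append(f"only {len(distinct_hooks)} distinct hook(s), need at least 3")
    # Assumption: "1080p" means the shorter side is at least 1080 pixels.
    if min(width, height) < 1080:
        issues.append(f"short side is {min(width, height)}px, need at least 1080px")
    if bitrate_mbps < 2:
        issues.append(f"bitrate is {bitrate_mbps} Mbps, need at least 2 Mbps")
    return issues


hooks = ["Stop scrolling", "POV: your skin at 7am", "I was skeptical"]
print(ad_set_issues(hooks, width=1080, height=1920, bitrate_mbps=8))  # []
```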

Platform-specific notes

Meta / Instagram: Advantage+ Creative evaluates AI-generated videos on the same quality signals as filmed content. The generation method is not a ranking factor. Designkit outputs consistently score 'High Quality' in Meta's creative scoring tool when brand assets are properly provided.

TikTok Spark Ads: TikTok's June 2025 policy update explicitly permits AI-generated video under Spark Ads, provided real product assets are used and an AI disclosure is included. Designkit adds an optional disclosure overlay on export.

Amazon: Amazon's compliance risk is content-based, not generation-method-based. The compliance questions are identical whether you filmed the video or generated it: does it contain pricing, competitive comparisons, or unverified claims? The built-in pre-check handles this automatically.

Pre-launch compliance checklist

❑ No unauthorized likenesses of real, identifiable people

❑ No competitor logos or trademarks visible in-frame

❑ No absolute superlatives in voiceover ('best', 'number one', '#1') without supporting substantiation

❑ AI-generated disclosure label included where required (EU, UK, California, and select other US states)

❑ Product claims documentation on file for health, finance, and supplement categories
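The superlatives item on this checklist is easy to screen for in a voiceover script before generation. The sketch below is a simple text scan, not Designkit's pre-check: it only looks for the three phrases named above ('best', 'number one', '#1'), and a hit means "check that you have substantiation", not "this ad will be rejected". The function name and pattern are illustrative assumptions.

```python
import re

# Matches the three phrases from the checklist as whole words or tokens.
SUPERLATIVE_PATTERN = re.compile(
    r"\bbest\b|\bnumber\s+one\b|#1(?!\d)", re.IGNORECASE
)


def find_superlatives(script: str) -> list[str]:
    """Return each flagged phrase in the order it appears in the script."""
    return [match.group(0).lower() for match in SUPERLATIVE_PATTERN.finditer(script)]


print(find_superlatives("The best treats, rated #1 by owners"))  # ['best', '#1']
```

Words that merely contain a flagged phrase, such as "bestseller", are not matched, which keeps false positives down at the cost of missing creative spellings.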

6. What Real Brands Have Achieved — Three Case Studies

The following cases are drawn from Designkit customer campaigns. All data points are verified against platform analytics or provided directly by the brand.

Peachtree Home Goods — Scaling Meta Ad Volume Without Scaling Headcount

Peachtree is a Shopify-native home goods brand generating roughly $180K monthly GMV. Their content problem was straightforward: they needed 60 UGC videos per month to sustain their Meta ad frequency strategy, and their existing agency was charging $650 per video.

That's a $39,000/month production budget — workable at their revenue level, but leaving almost no margin for creative testing. When a concept underperformed, they had no budget left to iterate.

Switching to Designkit's Image-to-Video mode, a single operator now maintains the full 60-video monthly output using their existing product photography catalog. The results after 90 days:

| CAC | Monthly production cost | ROAS |
| --- | --- | --- |
| ↓ 27% | $39,000 → $5,400 | 2.1 → 3.4 |

Glowly Beauty — Solving Amazon A+ Review Rejection

Glowly is an Amazon FBA beauty brand. Their problem was specific: their self-produced A+ videos had a sub-40% first-review pass rate. Each rejection reset a 3–5 business day review cycle and suppressed new listing momentum.

They switched to Designkit's Image-to-Video mode with the built-in Amazon TOS compliance pre-check enabled. Product photos feed directly to the agent; every output is screened against Amazon's 10 restricted content categories before download.

| First-review pass rate | Detail page conversion rate |
| --- | --- |
| <40% → 92% | 4.8% → 6.7% |

PawSnack Pet Treats — 200 TikTok Assets in 30 Days

PawSnack needed 200 TikTok UGC assets in 30 days for a new product launch — a volume that would have required simultaneously managing 20–30 UGC creators to achieve through traditional channels.

Using all three generation modes in combination — Text-to-Video for concept exploration, Image-to-Video for the product-photo-driven SKU set, and Reference Replication to clone their own top-performing TikTok ads across new products — they hit 200 videos in 3 working days via batch API.

| Viral breakouts (VV > 1M) | Launch-month GMV | Time to 200 assets |
| --- | --- | --- |
| 2 videos | $112,000 | 3 working days |

Case study data provided directly by brands and verified against platform analytics. Individual results vary.

7. AI UGC vs Human Creators vs Agency — An Honest Comparison

AI UGC is not the right choice for every situation. Here's an honest breakdown of where each model works best, without the promotional spin.

|  | AI UGC (Designkit) | Human Creator | Outsourced Agency |
| --- | --- | --- | --- |
| Cost per video | $9 (60 sec) | $350 – $800 | $250 – $650 |
| Delivery time | 2 – 5 minutes | 2 – 4 weeks | 1 – 3 weeks |
| Monthly capacity | Unlimited | 5 – 10 videos | 20 – 40 videos |
| Brand consistency | ★★★★★ (asset lock) | ★★ (personal style) | ★★★ |
| Perceived authenticity | ★★★★ (82% blind test) | ★★★★★ | ★★★ |
| Iteration speed | Minutes | Weeks | Days to weeks |
| Compliance risk | Low (no real portraits) | High (contract risk) | Medium |
| Best for | ≥ 20 videos/month | ≤ 5 premium videos | 5 – 30 videos |

Authenticity figure from Designkit internal blind study: 200 DTC marketing professionals evaluated videos generated by Seedance 2.0 using product images only. March 2026.

The honest answer is that AI UGC and human creators occupy different roles. AI UGC wins on volume, speed, and cost at scale. Human creators win on nuanced storytelling and organic audience trust — particularly in early-stage community building. The best-performing brands in our network use both.

Where AI UGC is not the right choice: early-stage brand identity work, premium lifestyle campaigns where high production value is the message itself, or categories where authentic human testimony is a hard purchase driver (e.g., complex health products with nuanced efficacy claims).

AI UGC in 2026 is no longer a novelty experiment. For ecommerce brands producing more than 20 videos a month — or any brand that wants to get to that volume — it represents a genuine structural change in how performance creative gets made.

The three-mode approach (Text-to-Video, Image-to-Video, Reference Replication) means there's an entry point regardless of your starting assets. Most brands find that Image-to-Video, fed by existing product photography, delivers the fastest time to first result. From there, Reference Replication becomes the primary scaling lever once you have a winning creative formula to clone.

What AI UGC won't do: replace the creative judgment that determines what to make. The agent executes; the brief still matters. Brands that invest in writing clear, specific briefs consistently outperform those that treat generation as a black box.

The brands winning with AI UGC in 2026 aren't the ones using the most sophisticated tools — they're the ones with the clearest understanding of what their audience responds to, and the production velocity to test it at scale.

Ready to try it? Designkit's first three generations are free — no credit card required.

Generate my first UGC video free →

This article was written and reviewed by the Designkit editorial team. Data and benchmarks cited reflect Designkit customer campaigns (Jan–Mar 2026, n > 4,000 ad sets) unless otherwise noted. Individual results vary. Platform policies referenced are current as of April 2026 and are subject to change.

Frequently Asked Questions

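Will AI-generated ads get flagged or suppressed on Meta or TikTok?

No, provided three conditions are met: start from authentic brand assets, produce at least three hook variants per ad set, and export at 1080p minimum with a 2 Mbps bitrate. Section 5 covers each condition along with the platform-specific notes.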

I only have product photos — no video footage. Is that enough?

Yes. Seedance 2.0's Image-to-Video pipeline is purpose-built for exactly this. Two to four product photos plus an optional model reference is typically sufficient to generate a 15-second, platform-native UGC video.

How does this compare to Meta Advantage+ AI-generated creative?

Meta Advantage+ generates layout and color variations from your existing creative assets. Designkit generates entirely new videos from scratch — from a text brief, product photos, or a reference video. The two tools solve different problems and work well in combination.

Can I batch-generate large volumes at once?

Yes. The Team plan supports batch API with up to 200 concurrent generation jobs per workspace. A 200-video batch typically completes in under 3 hours.

What file formats does Designkit export?

MP4 (H.264 / H.265), MOV ProRes, WebM. Up to 2K resolution, 30 or 60 fps, adjustable bitrate from 2 to 100 Mbps. All formats meet Meta, TikTok, Amazon, and Pinterest spec requirements natively.

Do I need to label ads as AI-generated?

In the EU, UK, and California, disclosure is required for realistic human portrayals. Designkit's export flow includes an optional AI-disclosure overlay. Regulations are evolving; we recommend checking current requirements in your specific market before launching.

What's the cost to produce 20 UGC videos a month?

On the Lite plan: $0.15/second × 15 seconds × 20 videos = $45/month. The Pro plan costs $0.62/second for hero-quality output and is typically used selectively for top-performing creative. Compare this to a $9,000/month agency budget for the same volume.
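To run the same arithmetic for your own volume, a small calculator is enough. This sketch uses the per-second rates quoted above and assumes billing is strictly rate × seconds × videos with no minimums or bundles, which may not match the actual pricing page. `monthly_cost` is a hypothetical helper; `Decimal` is used so the dollar figures stay exact.

```python
from decimal import Decimal


def monthly_cost(rate_per_second: str, seconds: int, videos: int) -> Decimal:
    """Multiply a per-second rate by clip length and monthly volume."""
    return Decimal(rate_per_second) * seconds * videos


lite = monthly_cost("0.15", seconds=15, videos=20)
pro = monthly_cost("0.62", seconds=15, videos=20)
print(f"Lite: ${lite}/month")  # Lite: $45.00/month
print(f"Pro:  ${pro}/month")   # Pro:  $186.00/month
```

Under the same assumption, a single 60-second clip at the Lite rate works out to $9.00, which lines up with the per-video figure in the comparison table.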

Is my product imagery kept private?

Yes. All uploaded assets are stored in your private workspace. Customer data is never used to train models, and assets are not shared across accounts.

Do you support agency accounts for multiple clients?

Yes. The Team plan includes per-client workspaces, SSO/SAML authentication, and white-label export. Designed for creative agencies managing performance content at scale.

What happens if a generated video comes out low quality?

Failed generations trigger an automatic credit refund within 24 hours. Manual quality complaints are reviewed within 48 hours with a guaranteed credit decision.
