HappyHorse AI Review: The Video Model That Topped the Leaderboard — What It Actually Means for Creators

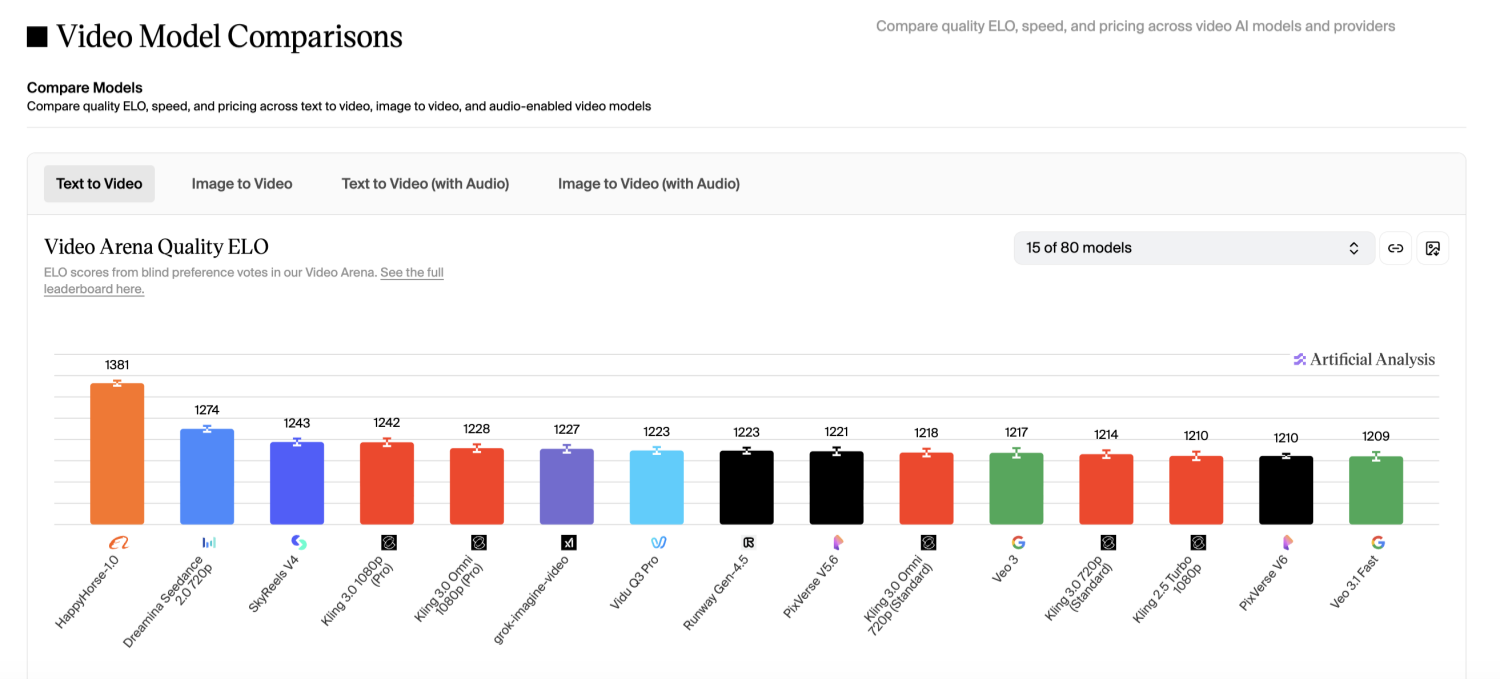

In early April 2026, a previously unknown AI video model called HappyHorse 1.0 appeared at the top of the Artificial Analysis Video Arena — an independent benchmark that evaluates text-to-video and image-to-video models using blind human preference testing. According to data from the Artificial Analysis leaderboard, HappyHorse claimed the #1 position in both the text-to-video (no audio) and text-to-video (with audio) categories, recording an Elo score of approximately 1,370–1,389.
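The short Python sketch below shows what a rating gap means in practice by turning it into an expected head-to-head preference rate. It assumes the classic Elo logistic curve with a 400-point scale. Artificial Analysis may fit its arena ratings differently, so treat the result as rough intuition, not the leaderboard's own math. The 50-point gap in the example is hypothetical.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Probability that model A is preferred over model B in one blind matchup.

    Assumes the classic Elo logistic curve with a 400-point scale; the arena's
    exact rating method may differ.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    # Hypothetical ratings: a model near 1,380 versus a rival 50 points lower.
    p = expected_win_rate(1380, 1330)
    print(f"Expected preference rate: {p:.1%}")  # prints "Expected preference rate: 57.1%"
```

Under this assumption, a 50-point lead means being preferred in roughly 57% of blind matchups. Small Elo gaps near the top of a leaderboard are therefore meaningful but not decisive.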

Part 1: What HappyHorse AI Is and Why It's Making Headlines

1.1 HappyHorse 1.0 Tops the AI Video Leaderboard

What made the story unusual wasn't just the ranking — it was the absence of any public announcement, known company, or prior product history. Within days, the model had attracted significant attention across AI research communities and developer forums, with many treating it as a signal that a new competitive tier in AI video generation had arrived.

Unlike most major releases backed by large labs or public funding rounds, HappyHorse surfaced quietly, with a minimal website and no press coverage at launch. That contrast — strong benchmark performance, near-zero marketing — is part of what drove curiosity and, subsequently, organic search volume for the model's name.

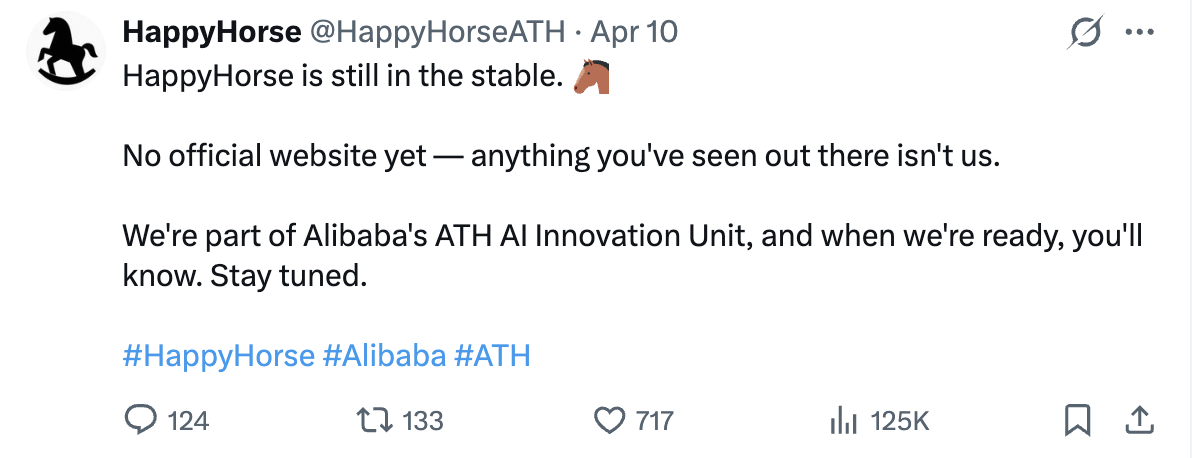

1.2 The Team Behind This Mysterious Model

No official developer information was published at launch. Based on reporting from multiple AI-focused outlets (Source), circumstantial evidence points toward a team with roots in Chinese AI development — with speculation linking the project to former personnel from Kuaishou's Kling video model team and Alibaba's research division. As of publication, neither team membership nor institutional backing has been formally confirmed. This ambiguity is worth noting: it adds both intrigue and uncertainty to any long-term adoption decision.

Part 2: Core Features of HappyHorse 1.0

2.1 Native Audio and Video in a Single Pass

The most technically significant feature of HappyHorse 1.0 is its joint audio-video generation. Dialogue, sound effects, and ambient audio are produced in the same pass as the video rather than added in post-processing. Native audio is not yet standard across the field. Runway Gen-3, for example, outputs silent clips that require a separate audio workflow, although Sora 2 does generate synchronized audio alongside its video.

For content creators and marketers, this distinction has practical consequences. A workflow that normally requires video generation → audio recording or synthesis → timeline editing → audio sync can be compressed into a single prompt-to-output step. The efficiency gain is real, particularly for high-volume short-form video production where iteration speed matters.

HappyHorse's architecture uses a unified transformer approach that processes video and audio tokens in the same generation pass. This is consistent with a broader trend in multimodal model design — one that Veo 3 (Google DeepMind) has also pursued with its spatial audio capabilities.

2.2 Multilingual Lip Sync Across Six Languages

HappyHorse 1.0 supports prompting and output generation in six languages: English, Chinese (Mandarin), Japanese, Korean, German, and French. More notably, the model includes native phoneme-level lip synchronization for each supported language — meaning on-screen characters' mouth movements are aligned to the generated speech in the target language, not simply mapped from an English base.

For teams producing multilingual content — localized ad campaigns, educational videos, or social media content across regional markets — this removes a common friction point that typically requires dedicated lip-sync tools or manual correction in post. It is one of the clearest differentiators between HappyHorse and most Western-developed video models currently available.

2.3 1080p Output, Complex Motion, and Multi-Shot Coherence

HappyHorse 1.0 generates video natively at 1080p / 30 FPS, with an average inference time of approximately 10 seconds per clip. The model's architecture includes specific handling for complex motion scenarios — physical interactions, crowd scenes, and multi-person compositions — areas where many text-to-video models still struggle with temporal consistency.

A feature the HappyHorse team highlights is multi-shot narrative coherence: the ability to maintain consistent character appearance, lighting, and spatial relationships across multiple generated clips within the same session. This is directly relevant to anyone producing structured video content (product demos, explainer videos, narrative advertising) rather than standalone clips.

Key specifications:

- Model parameters: 15 billion (unified video + audio transformer)

- Output resolution: Native 1080p HD, 30 FPS

- Inference speed: ~10 seconds average (8-step process)

- Languages: EN / ZH / JA / KO / DE / FR with native lip sync

- Generation modes: Text-to-video, Image-to-video

Part 3: HappyHorse vs. Sora, Kling, and Runway

3.1 Side-by-Side Feature Comparison

| Feature | HappyHorse 1.0 | Sora 2 | Kling 3.0 | Runway Gen-3 |
|---|---|---|---|---|
| Max Resolution | 1080p | 4K | 1080p | 1080p |
| Native Audio Generation | Joint (video + audio) | Yes (synchronized audio) | Partial (newer versions) | No |
| Multilingual Lip Sync | 6 languages | No | Limited | No |
| Open Source | Announced, pending | Closed | Closed | Closed |
| API Access | In development | Available | Available | Available |
| Leaderboard Rank (AA Arena) | #1 (April 2026) | Top 5 | Top 5 | Top 10 |

Source: Artificial Analysis Video Arena. Rankings reflect an April 2026 snapshot and are subject to change.

3.2 Where HappyHorse Has a Real Edge

HappyHorse combines three capabilities that the established models do not offer together in a single tool:

- Unified audio-video output — eliminates a separate post-production step that most current workflows still require.

- Multilingual native lip sync — a genuine gap in Western-developed models, relevant to any global content operation.

- Open-source trajectory — if weights are released as promised, HappyHorse would become the only top-ranked model available for self-hosting and fine-tuning, a significant advantage for enterprise and research use cases.

3.3 Where It Still Falls Short

An honest assessment requires noting the limitations:

- The API and model weights have not been released as of this writing — the open-source commitment remains a future promise, not a current reality.

- Maximum resolution is 1080p; competitors like Sora 2 and Veo 3 offer 4K output, which matters for broadcast and cinema applications.

- Developer identity is unconfirmed, which creates institutional uncertainty for teams that require vendor accountability or SLA guarantees.

- Long-term uptime, rate limits, and pricing have not been publicly detailed.

Part 4: How to Start Using HappyHorse AI Today

4.1 Access Options Right Now

HappyHorse's official API and downloadable model weights are not yet publicly available. You can still test the model's output quality before committing to any production workflow:

- Artificial Analysis Video Arena (artificialanalysis.ai) — blind head-to-head testing against other models, no account required. Useful for unbiased quality comparison.

- Official HappyHorse AI website: no confirmed official site is available yet.

For teams evaluating HappyHorse for production use, the recommended approach is to run a controlled batch of test prompts — ideally matching your actual content use case — across HappyHorse and at least one established alternative (Kling or Runway), then compare results side by side before the API becomes available.
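The Python sketch below shows one way to tally such a side-by-side pilot. It assumes each reviewer sees two clips for the same prompt, without labels, and records one preference. The `Judgment` record, the `blind_order` and `win_rates` helpers, and the labels "candidate" (for example HappyHorse) and "baseline" (for example Kling or Runway) are all hypothetical. The sample prompts and picks are placeholders, not real results.

```python
import random
from collections import Counter
from dataclasses import dataclass


@dataclass
class Judgment:
    """One blind comparison: the reviewer saw two unlabeled clips and picked one."""
    prompt: str
    left: str    # model label shown on the left
    right: str   # model label shown on the right
    winner: str  # must be either left or right


def blind_order(model_a: str, model_b: str, rng: random.Random) -> tuple[str, str]:
    """Randomize left/right placement so screen position doesn't reveal the model."""
    pair = [model_a, model_b]
    rng.shuffle(pair)
    return pair[0], pair[1]


def win_rates(judgments: list[Judgment]) -> dict[str, float]:
    """Fraction of head-to-head comparisons each model won."""
    wins = Counter()
    shown = Counter()
    for j in judgments:
        if j.winner not in (j.left, j.right):
            raise ValueError(f"winner {j.winner!r} was not in the pair for {j.prompt!r}")
        shown[j.left] += 1
        shown[j.right] += 1
        wins[j.winner] += 1
    return {model: wins[model] / shown[model] for model in shown}


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical prompts and picks; replace with your own test batch and reviewer choices.
    batch = [
        ("15s product demo, studio lighting", "candidate"),
        ("French-language greeting, close-up", "candidate"),
        ("crowd scene, street market", "baseline"),
    ]
    judgments = []
    for prompt, picked in batch:
        left, right = blind_order("candidate", "baseline", rng)
        judgments.append(Judgment(prompt, left, right, winner=picked))
    print(win_rates(judgments))
```

Shuffling the clip order matters because reviewers can lean toward whichever slot they look at first. Randomizing placement is a small-scale version of the blind setup the Artificial Analysis arena uses.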

Part 5: Who Should Actually Use HappyHorse AI

Not every team needs the same thing from an AI video model. Here's a straightforward breakdown based on current capabilities:

- Content creators and early adopters: Worth testing now. The leaderboard performance is verifiable, the demo access is free, and understanding its strengths before the API launches puts you ahead of the adoption curve. Treat it as a quality benchmark, not yet a production dependency.

- Multilingual and global marketing teams: HappyHorse's native audio and six-language lip sync capability addresses a real workflow gap. If your team regularly produces localized video content across multiple languages, this model's architecture is directly relevant — monitor the API release closely.

- Developers and researchers: If the open-source commitment is fulfilled, HappyHorse would be the only top-ranked model available for self-hosting and fine-tuning. Watch for an official GitHub repository or Hugging Face page announcing the weight release.

The overall picture: HappyHorse 1.0 represents a genuine technical advance in joint audio-video generation and multilingual output. For production use, the responsible decision is to pilot it now and commit when the API is stable. The underlying model quality appears to justify the wait.

Conclusion

HappyHorse AI is one of the more technically interesting developments in the AI video generation space in 2026 — not because of marketing, but because of benchmark performance and a genuinely differentiated architecture. Its native joint audio-video generation and multilingual lip sync capabilities address real gaps in the current landscape. The open questions around team identity, API availability, and long-term support are legitimate reasons to hold off on deep integration. But they're not reasons to ignore it. Bookmark this model, run the demo tests, and revisit when the weights drop.


Frequently Asked Questions


Has HappyHorse released its model weights or API?

As of April 2026, neither the open-source model weights nor a public API have been released. The team has stated both are coming, but no confirmed release date has been announced.

How does HappyHorse compare to Kling for audio generation?

HappyHorse generates audio natively in the same pass as the video — dialogue, sound effects, and ambient audio are produced together. Kling's newer versions have introduced some audio features, but the integration is not equivalent to HappyHorse's joint generation architecture.

