Seedance 3.0 Predictions: Breaking the 10-Minute Barrier in AI Video Production

AI video generation has made remarkable strides over the past two years, but for anyone who's tried to produce anything longer than a social clip, the limitations are painfully familiar. Most tools cap output at 10–30 seconds per clip. Post-production dubbing workflows are clunky, expensive, and rarely convincing. And for independent creators or small brand teams, the per-generation cost still makes serious iteration feel out of reach.

That's why the speculation around Seedance 3.0 has caught so many people's attention. Across Reddit threads, developer Discord servers, and creator community forums, a consistent set of technical directions has been surfacing — attributed to sources described as close to the project. These aren't official announcements. No launch date has been confirmed. But the Seedance 3.0 predictions are specific enough, and consistent enough across sources, to merit a serious look.

This article is a predictions roundup and credibility analysis — not a product announcement. We'll walk through four major rumored features, apply a structured framework for evaluating each one, compare the predictions against Seedance 2.0's baseline, and give you a checklist to run your own tests when the model eventually drops. Here's what we'll cover:

- 10-minute continuous video generation with Narrative Memory Chain

- Native multilingual emotional voiceover with lip sync

- Cinema-grade storyboard and real-time directing tools

- A predicted cost reduction to 1/8th of Seedance 2.0

Part 1: What We're Hearing About Seedance 3.0 — And How to Evaluate It

The four core Seedance 3.0 predictions circulating in Reddit threads and developer communities can be summarized as:

- Unlimited continuous generation: 10+ minute seamless video output; internal tests reportedly reached 18 minutes without noticeable quality degradation

- Narrative Memory Chain: An architecture that retains plot continuity, character traits, and scene settings across long sequences, automatically structuring multi-act narratives

- Native multilingual emotional voiceover: End-to-end jointly trained lip sync supporting Chinese, English, Japanese, and Korean, with auto-adjusted tone, breathing, sobbing, and laughter

- 1/8th the cost of Seedance 2.0: Via next-generation model distillation and inference optimization

These predictions come from sources described as close to the project, shared across community forums. Before treating any of them as fact, it's worth building a framework for how to evaluate them.

1.1 A Credibility Checklist: How to Evaluate These Predictions

Not all predictions are equal. Here's a four-point framework for scoring each claim:

- Source type: Is this from a core team member, a contractor/adjacent contributor, or third-hand? Primary sources carry more weight.

- Reproducible experiment description: Does the claim include measurable specifics? ("18 minutes without noticeable degradation" is more credible than "much longer videos.")

- Consistency with current tech trajectory: Does the claim align with where the broader AI video field is heading? Predictions that follow known research directions are more plausible.

- Comparative baseline: Does it reference a verifiable baseline? ("1/8th of 2.0 cost" implies a known denominator.)

Credibility Rating Template

- High: 3–4 criteria met, including a primary source and specific, testable metrics

- Medium: 2–3 criteria met, secondary source but technically consistent

- Low: 0–1 criteria met, with a vague claim, no specifics, and no clear source lineage

Each feature prediction in later sections is rated using this framework.
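
For readers who want to apply the template consistently, here is a minimal Python sketch of the four-point framework. The `Claim` class, its field names, and the tie-break rule (a claim needs both a primary source and measurable specifics to reach High) are assumptions made for illustration. The function returns only the three template levels, so in-between ratings such as Medium–High remain an editorial judgment.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    """One rumored feature, scored against the four checklist criteria."""
    primary_source: bool        # from a core team member rather than secondhand
    measurable_specifics: bool  # cites a number or experiment that can be tested
    fits_tech_trajectory: bool  # consistent with known research directions
    has_baseline: bool          # references a verifiable baseline


def rate(claim: Claim) -> str:
    """Map the number of criteria met to High / Medium / Low."""
    met = sum([
        claim.primary_source,
        claim.measurable_specifics,
        claim.fits_tech_trajectory,
        claim.has_baseline,
    ])
    # Assumed tie-break: High requires a primary source and specific metrics.
    if met >= 3 and claim.primary_source and claim.measurable_specifics:
        return "High"
    if met >= 2:
        return "Medium"
    return "Low"


# The "1/8th the cost" rumor: secondhand, but specific, plausible, and baselined.
cost_claim = Claim(primary_source=False, measurable_specifics=True,
                   fits_tech_trajectory=True, has_baseline=True)
print(rate(cost_claim))  # Medium
```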

Part 2: Predicting Seedance 3.0's Biggest Feature — 10-Minute AI Video and Narrative Memory

The single most impactful prediction — and the one that would most fundamentally change what AI video means for professional production — is AI long-form video generation. Today's leading tools operate in short bursts. Most commercial-grade generators are capped at 10–30 seconds per clip. Stitching those clips into something coherent requires significant manual intervention: matching character appearance, maintaining scene continuity, managing pacing.

According to sources discussed in the community, Seedance 3.0 is being developed with a Narrative Memory Chain AI architecture — a mechanism that maintains a persistent representation of characters, settings, and plot state across the full generation context. Rather than treating each segment in isolation, the model reportedly tracks who is in the scene, what they're wearing, where the story is in its arc, and how the visual tone should evolve.

The technical logic is consistent with where long-context AI research has been heading. Similar memory-augmented approaches have been explored in language models; applying that paradigm to video generation — with additional constraints for visual consistency — is a logical next step. The claim of 18-minute stable output is specific and measurable, which raises its credibility.

Credibility Rating: Medium–High

The architecture direction aligns with known research trends. The specific 18-minute benchmark is cited with enough detail to be testable. Source attribution remains secondhand.

2.1 What 10-Minute Continuous Generation Would Actually Enable

If this prediction holds, here are five production use cases it would unlock — each with a concrete success criterion:

- Independent short films — Success criterion: character appearance consistency across scenes and cuts. A 10-minute AI film is only viable if the protagonist looks the same in minute 1 and minute 9.

- Long-form TVC ads — Success criterion: brand tone and visual language maintained across narrative beats, without manual keyframe intervention.

- Game cinematics — Success criterion: smooth scene transitions between environments and action sequences without jarring visual discontinuities.

- YouTube narrative series — Success criterion: episode-level pacing control, with the model respecting story structure rather than generating random visual noise.

- Tutorial long-takes — Success criterion: instructional clarity maintained as on-screen demonstrations evolve over several minutes.

Prompt structure suggestion for long-form generation:

[Concept sentence: one-line premise]

→ [Character and world setup: who, where, what they look like]

→ [Three-act framework: setup beat / conflict beat / resolution beat]

→ [Shot rhythm instructions: pacing preference, camera movement style, cut frequency]
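
If you plan to reuse this structure across many test prompts, a small helper keeps the four layers in a fixed order. This is a sketch only: `build_long_form_prompt` is a hypothetical helper, not part of any Seedance tooling, and it produces plain text rather than calling a model.

```python
def build_long_form_prompt(concept: str, setup: str,
                           acts: list[str], shot_rhythm: str) -> str:
    """Join the four prompt layers in order, separated by arrow markers."""
    if len(acts) != 3:
        raise ValueError("expected exactly three act beats: setup, conflict, resolution")
    layers = [
        concept,                           # one-line premise
        setup,                             # who, where, what they look like
        "Three acts: " + " / ".join(acts), # setup / conflict / resolution beats
        "Shot rhythm: " + shot_rhythm,     # pacing, camera style, cut frequency
    ]
    return "\n→ ".join(layers)


prompt = build_long_form_prompt(
    "A courier crosses a flooded city to deliver one last letter.",
    "Mara, 30s, yellow raincoat, red bag; near-future coastal city at dusk.",
    ["she accepts the job", "the bridge collapses", "she delivers it by boat"],
    "slow pacing, handheld camera, long takes with few cuts",
)
print(prompt)
```

Keeping the layers as separate arguments makes it easier to change one variable at a time (for example, only the shot rhythm) when comparing outputs.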

For e-commerce teams already working with AI design tools, platforms like Designkit — an E-Commerce Design AI Agent with native Seedance integration — can connect product assets directly to long-form video workflows without additional setup, making the transition to longer-format content more practical for product-focused teams.

2.2 How to Test Long-Form Stability When Seedance 3.0 Drops

When Seedance 3.0 becomes available, run this checklist before committing to production workflows:

- Character appearance drift: Generate a 5+ minute clip featuring a named character. Compare facial features, clothing, and hair in the opening and closing segments.

- Prop and costume consistency: Introduce a specific prop in scene one (e.g., a red bag). Check whether it persists through subsequent scenes.

- Dialogue and lip/action sync: Generate a spoken dialogue sequence. Check for mouth movement accuracy and emotional expression alignment.

- Quality degradation in mid-to-late segments: Does visual fidelity hold up after minute 5? Look for blur artifacts, color inconsistencies, or compositional breakdowns.

- Camera direction controllability: Issue explicit directorial instructions (dolly in, wide shot, close-up on hands). Measure how reliably the model follows them.

Log your test parameters, prompts, and output samples. Sharing these in community threads will help build a collective benchmark baseline faster than any single team can do alone.
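
One way to keep those logs comparable across teams is a fixed record per test run. The sketch below is an assumption about what such a record could look like. The check names mirror the five checklist items above, and the `StabilityTest` class and its JSON layout are hypothetical rather than any community standard.

```python
import json
from dataclasses import dataclass, asdict, field

# The five checks from the list above, as short identifiers (naming is assumed).
CHECKS = (
    "character_drift",
    "prop_consistency",
    "dialogue_sync",
    "late_segment_quality",
    "camera_control",
)


@dataclass
class StabilityTest:
    prompt: str
    duration_s: int
    results: dict = field(default_factory=dict)  # check name -> "pass" / "fail"
    notes: str = ""

    def record(self, check: str, passed: bool) -> None:
        """Store the outcome of one checklist item."""
        if check not in CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.results[check] = "pass" if passed else "fail"

    def missing(self) -> list:
        """Checklist items that have not been run yet, in checklist order."""
        return [c for c in CHECKS if c not in self.results]

    def to_json(self) -> str:
        """Serialize the run so it can be pasted into a community thread."""
        return json.dumps(asdict(self), indent=2)


run = StabilityTest(prompt="Named character, 5+ minute clip", duration_s=320)
run.record("character_drift", True)
run.record("camera_control", False)
print(run.missing())
print(run.to_json())
```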

Part 3: The Multilingual Voiceover Prediction — Emotional Dubbing Built Into the Model

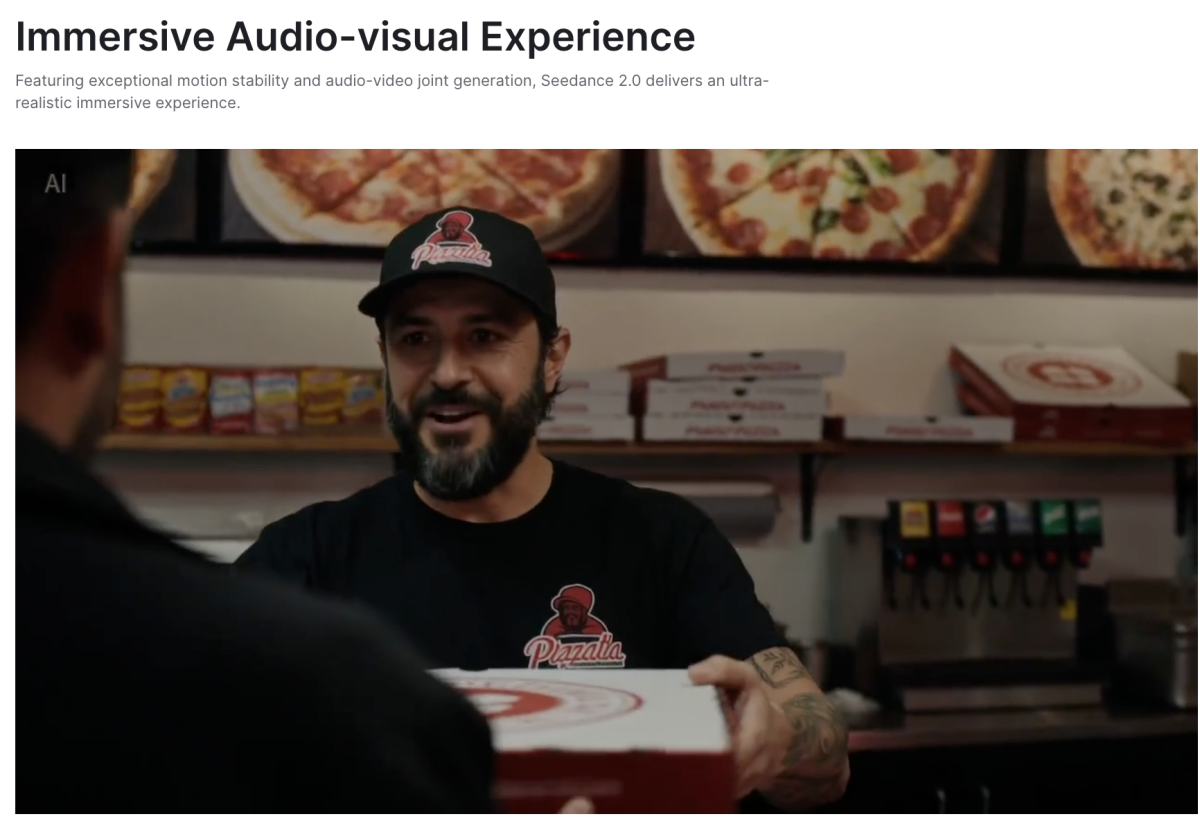

Current AI video workflows handle audio in a fundamentally awkward way: generate the visual first, run it through a text-to-speech engine, then attempt to align the lip movements in post. The results are often passable for low-stakes content but break down immediately under scrutiny — unnatural pauses, robotic emotional delivery, and lip sync that drifts in and out of alignment.

The prediction for Seedance 3.0 is a different architectural approach: end-to-end joint training of visual and audio streams, so voiceover isn't added after the fact but generated alongside the video as a unified output. According to community discussion, this AI multilingual voiceover system would support Chinese, English, Japanese, and Korean natively, with automatic adjustment of emotional prosody — including breathing patterns, sobbing, laughter, and tonal shifts.

One detail that circulated in community discussions: a martial arts test clip was reportedly generated using this system, and reviewers described the voice performance as matching the quality of professional voice actors. That's a strong claim, but it's a specific and testable one.

The content types that would benefit most: dramatic dialogue scenes, product explainer videos where speaker credibility matters, cross-border e-commerce brand films targeting multiple regional markets, and character IP short dramas where consistent vocal identity is as important as visual consistency.

Credibility Rating: Medium

End-to-end audio-visual joint training is technically sound and aligns with multimodal research directions. The "professional voice actor" comparison is compelling but comes from a single reported test clip — wide-scale verification is needed.

3.1 What to Watch For: Voice Quality, Sync Accuracy, and Compliance

When evaluating multilingual voiceover quality, use these six dimensions:

- Lip sync accuracy: Does mouth movement match phoneme timing, or does it drift during fast speech or emotional peaks?

- Pronunciation naturalness: Does the synthesized voice handle regional accent variation, idioms, and language-specific rhythm?

- Emotional consistency: Does the vocal performance stay in character across scene changes and tonal shifts?

- Background noise and mix quality: Is the voice cleanly separated from ambient audio, or does bleed create a muddy mix?

- Cross-language rhythm differences: Languages like Japanese and English have different speech rhythms. Does the model adapt, or does it force one language's pacing onto another?

- Silence and breath handling: Natural speech includes micro-pauses and breath sounds. Flat, unbroken delivery is a telltale sign of synthetic audio.
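
The lip sync dimension is the easiest of the six to quantify. As a rough sketch, suppose you have annotated (by hand or with your own tooling) the time each syllable starts in the audio and the time the matching mouth movement starts on screen. Both timestamp lists are assumed inputs here, and the function names are hypothetical.

```python
def sync_offsets_ms(audio_onsets_ms, mouth_onsets_ms):
    """Per-syllable offset: positive means the mouth moves after the sound."""
    if len(audio_onsets_ms) != len(mouth_onsets_ms):
        raise ValueError("both lists must describe the same syllables")
    return [mouth - audio for audio, mouth in zip(audio_onsets_ms, mouth_onsets_ms)]


def summarize(offsets):
    """Average error, worst-case error, and drift from first to last syllable."""
    abs_offsets = [abs(o) for o in offsets]
    return {
        "mean_abs_ms": sum(abs_offsets) / len(abs_offsets),
        "max_abs_ms": max(abs_offsets),
        "drift_ms": offsets[-1] - offsets[0],
    }


offsets = sync_offsets_ms([0, 500, 1000], [10, 530, 1060])
print(offsets)             # [10, 30, 60]
print(summarize(offsets))  # max_abs_ms is 60, drift_ms is 50
```

A growing `drift_ms` over a long clip points to sync that slides out of alignment over time, while a large `max_abs_ms` with little drift points to isolated misses, for example during fast speech.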

Compliance notes: As AI voiceover becomes more capable, compliance obligations multiply. Key areas to address:

- Portrait and voice rights: If the voiceover is modeled on a real person's voice, rights clearance is required in most jurisdictions.

- Brand and ad compliance: Regulated industries (finance, health, pharmaceuticals) have specific rules about AI-generated spokesperson content.

- Deepfake identification: Platforms increasingly require labeling of AI-generated audio-visual content. Build labeling into your workflow from the start, not as an afterthought.

For cross-border e-commerce brands navigating these compliance requirements, platforms like Designkit — an E-Commerce Design AI Agent already integrated with Seedance — are designed to help teams produce brand-ready video content within established creative guardrails, without requiring deep prompt engineering expertise.

Part 4: Directing Tools, Cinematic Presets, and the "1/8 Cost" Prediction

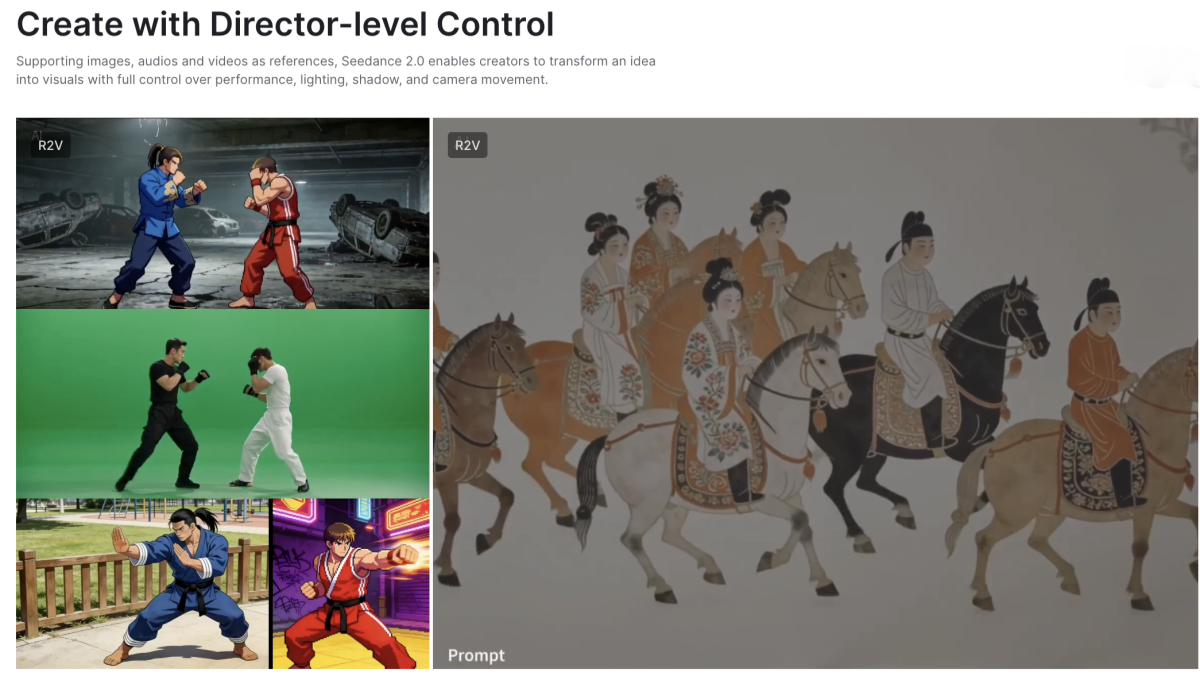

Cinema-Grade Directing Tools

The third major prediction is controllability — specifically, cinema-grade directing tools that go beyond text prompts into structured storyboard and real-time command input. This is what would make Seedance 3.0 a true AI storyboard generator for professional use.

Storyboard script input format example:

Shot 1: Wide-angle dolly as hero rises from the rubble. Duration 6s. Color preset: Film grain. Cut to—

Shot 2: Close-up on hands gripping the ledge. Duration 3s. No music. Cut to—

Shot 3: Over-the-shoulder POV looking down into the city. Duration 8s. IMAX grade.
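
Because the rumored input format is not documented anywhere official, the sketch below only shows how a team might keep a storyboard as structured data and render it to text in the style of the example above. The `Shot` class and its fields are assumptions, and the renderer leaves out the cut markers for simplicity.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    description: str
    duration_s: int
    look: str = ""  # optional preset or audio note, e.g. "Color preset: Film grain"


def render_storyboard(shots):
    """Render one numbered line per shot."""
    lines = []
    for number, shot in enumerate(shots, start=1):
        line = f"Shot {number}: {shot.description}. Duration {shot.duration_s}s."
        if shot.look:
            line += f" {shot.look}."
        lines.append(line)
    return "\n".join(lines)


def total_runtime_s(shots):
    """Sum of shot durations, useful for checking against a target length."""
    return sum(shot.duration_s for shot in shots)


storyboard = [
    Shot("Wide-angle dolly as hero rises from the rubble", 6, "Color preset: Film grain"),
    Shot("Close-up on hands gripping the ledge", 3, "No music"),
    Shot("Over-the-shoulder POV looking down into the city", 8, "IMAX grade"),
]
print(render_storyboard(storyboard))
print(total_runtime_s(storyboard))  # 17
```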

Real-time directing command types (as predicted):

- Shot type selection (wide, medium, close-up, extreme close-up, POV)

- Camera movement (dolly, pan, tilt, handheld, static)

- Editing rhythm (cut pace: slow/medium/fast; match cut vs. hard cut)

- Color and look presets (IMAX, film-style, Netflix grading standards)

Why controllability is the decisive threshold for commercial use: without it, AI video remains a generative toy. With it, it becomes a production tool. The moment a director can issue precise cinematic instructions and have the model reliably execute them, AI video enters professional workflows permanently.

The "1/8 Cost" Prediction

According to community discussion and analysis of AI infrastructure trends, the predicted cost reduction is attributed to two technical mechanisms: next-generation model distillation (compressing a large model's capabilities into a smaller, faster architecture) and efficient inference optimization (reducing the compute required per generation step).

Budget-thinking framework for three user types:

- Independent creators: 1/8 cost means iteration frequency multiplies. Instead of generating 3 versions of a scene, you can generate 20+, enabling prompt refinement cycles that were previously cost-prohibitive.

- Studios doing bulk long-form production: Cost reduction directly changes the economics of using AI for full-episode generation. What previously required selective AI assist becomes viable as a primary production method.

- Advertisers building audience-segmented version matrices: A campaign that previously required 5 variants (budget-constrained) could justify 40+ localized, audience-specific versions.
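
The arithmetic behind these figures is simple: on a fixed budget, the number of affordable generations scales with the inverse of the cost ratio. A minimal sketch, assuming the rumored 1/8 ratio holds and that pricing is linear per generation (neither is confirmed):

```python
from fractions import Fraction


def affordable_generations(current_count: int, cost_ratio: Fraction) -> int:
    """Generations the same budget buys when per-generation cost is scaled by cost_ratio."""
    if cost_ratio <= 0:
        raise ValueError("cost_ratio must be positive")
    return int(current_count / cost_ratio)


RUMORED_RATIO = Fraction(1, 8)  # unverified community figure

print(affordable_generations(3, RUMORED_RATIO))  # 24 scene versions instead of 3
print(affordable_generations(5, RUMORED_RATIO))  # 40 campaign variants instead of 5
```

These results line up with the "20+" and "40+" figures in the list above. If the real reduction turns out smaller, swap in a different `Fraction` to see how the budget changes.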

Credibility Rating: Medium

The technical mechanisms (distillation, inference optimization) are real and widely used in AI model development. The specific "1/8th" figure is a strong quantitative claim that needs independent verification — it implies precise cost benchmarking against a known Seedance 2.0 baseline. Plausible direction, unverified magnitude.

4.1 Seedance 3.0 vs Seedance 2.0: Predicted Comparison Table

| Dimension | Seedance 2.0 (Known) | Seedance 3.0 (Predicted) | What It Means If True | Still Needs Verification |
|---|---|---|---|---|
| Max video length | Short-form clips (~10–30s) | 10+ minutes continuous | Opens feature film / series production | Whether quality holds end-to-end |
| Narrative consistency | Per-clip basis | Narrative Memory Chain across full video | Characters/scenes stay coherent over time | Long-duration character drift tests |
| Voiceover pipeline | Post-generation TTS + alignment | End-to-end joint audio-visual training | Natural, in-sync emotional delivery | Side-by-side with professional voice actors |
| Multilingual support | Audio-video sync improvement | Native CN/EN/JP/KR with emotional prosody | True multilingual production without re-dubbing | Language-specific rhythm accuracy |
| Directing tools | Prompt-based | Storyboard input + real-time commands | Shot-precise cinematic control | Reliability on complex multi-shot scripts |
| Inference cost | Baseline | ~1/8th of 2.0 | Democratizes iteration and bulk production | Independent cost benchmarking needed |
| Output quality standard | Enhanced photorealism | Not yet reported | N/A | Full visual fidelity comparison needed |

All Seedance 3.0 figures are based on community predictions as of 2026. No figures have been officially confirmed.

Seedance 3.0 Could Redefine What One Person Can Create — Here's What to Watch For

The four predictions covered here — long-form continuous generation via Narrative Memory Chain, native multilingual emotional voiceover, cinema-grade directing tools, and a dramatic cost reduction — each represent a meaningful step change, not just an incremental improvement over Seedance 2.0.

If even half of these predictions hold up under real-world testing, the implications for independent creators, brand production teams, and studios are significant. AI video generation would stop being a tool for short-form content and start functioning as a genuine production medium for narrative work, which some in the community describe as the start of an AI feature film era.

That's still a conditional. Predictions sourced from community forums — however specific and technically coherent — need verification against actual output. When Seedance 3.0 drops, return to the checklist in section 2.2: character consistency, prop continuity, dialogue sync, quality degradation over time, and camera direction accuracy. Those five tests will tell you more than any feature announcement.

Bookmark this article for updates as more information becomes available. For creators and brands who want to be ready the moment Seedance 3.0 launches, platforms like Designkit — already integrated with Seedance — offer a practical starting point to explore what AI-powered video creation looks like in production today.

Frequently Asked Questions

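When is Seedance 3.0 expected to release?

No launch date has been confirmed. The predictions discussed here come from community sources, not official announcements, so any release timing is still speculation.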

Will Seedance 3.0 really support 10-minute continuous video generation?

This is one of the most consistently cited predictions in community forums, attributed to sources described as close to the project. Internal tests reportedly reached 18 minutes without noticeable quality degradation. Until the model is publicly available and independently tested, treat this as a prediction, not a confirmed specification.

How much will Seedance 3.0 cost compared to Seedance 2.0?

Community predictions suggest an inference cost reduction to approximately 1/8th of Seedance 2.0's computational expense, attributed to distillation and inference optimization. This figure has not been independently verified and should be evaluated against official pricing when announced.

Is Seedance 3.0 officially announced yet?

No. As of the time of writing, Seedance 3.0 has not been officially announced. This article is a community predictions roundup and credibility analysis — not coverage of a confirmed product launch.
